Chat

Chat is where you actually run an assessment. You pick a playbook (or start blank), select which tool groups the AI is allowed to call, and start a conversation. The AI then plans steps, executes commands on remote agents, and writes findings as insights — all while you watch the transcript stream in.

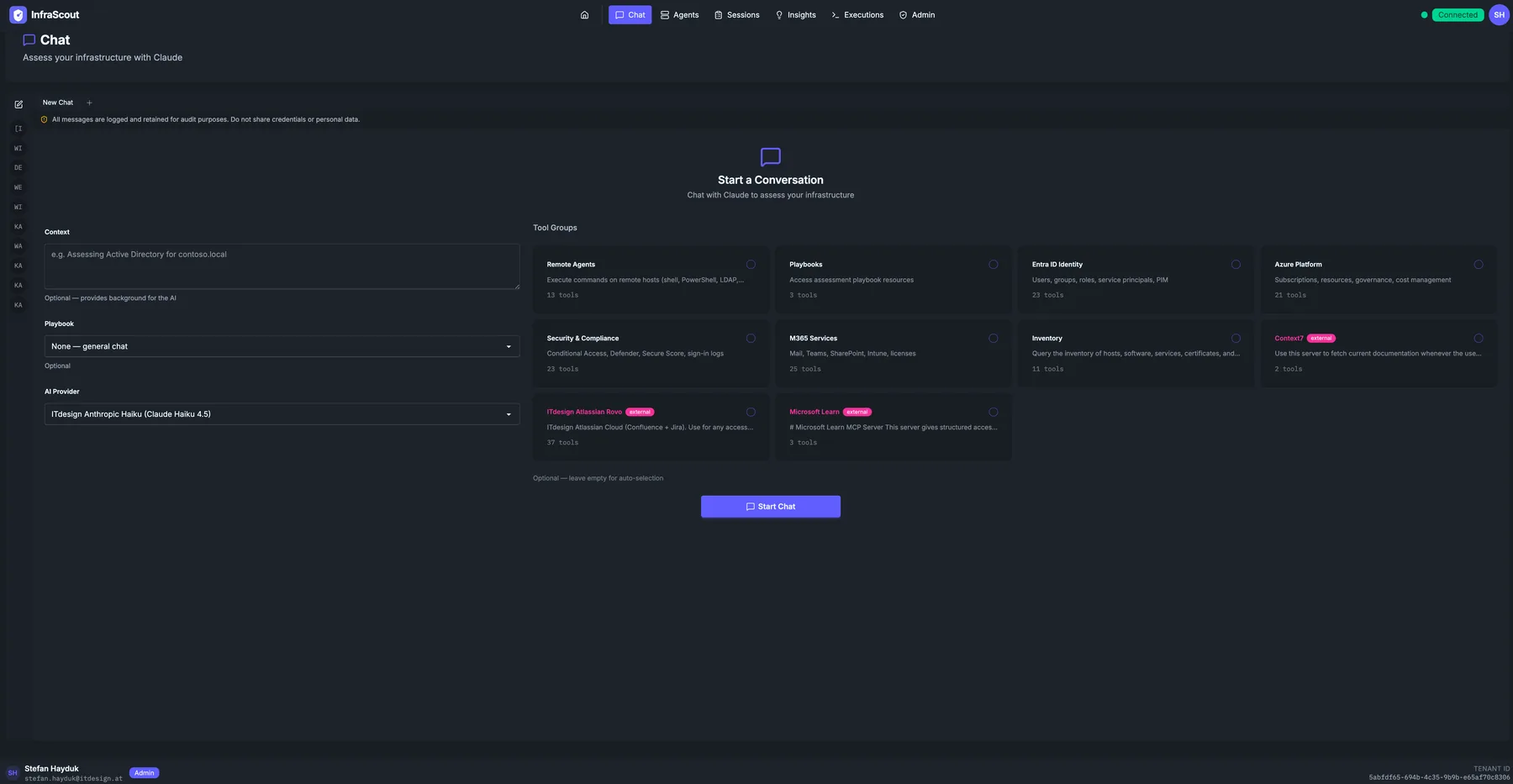

Starting a conversation

The Chat landing screen is a single-form launcher. Until you click Start Chat, no AI request has been made and no remote tool has been invoked.

The left column captures intent:

- Context — an optional free-text note about the engagement (for example, "Assessing Active Directory for contoso.local"). The AI receives it as background; it never reaches a tool unredacted.

- Playbook — choose one of the published assessment playbooks, or leave it on None — general chat for an unscripted conversation.

- AI Provider — which configured Claude model handles the conversation. Defaults to your tenant's default provider.

The right column is the tool catalog. Each card represents a tool group — Remote Agents, Playbooks, Entra ID Identity, Azure Platform, Security & Compliance, M365 Services, Inventory, plus any registered external MCP servers (Context7, Microsoft Learn, ITdesign Atlassian Rovo, and so on). Tick the boxes for the groups the AI is allowed to call this session. Tool groups marked external route through a registered MCP server; the rest are built-in InfraScout tools.

The footer shows the count of selected playbooks and the Start Chat button. Once you press it, the page transitions into the conversation view.

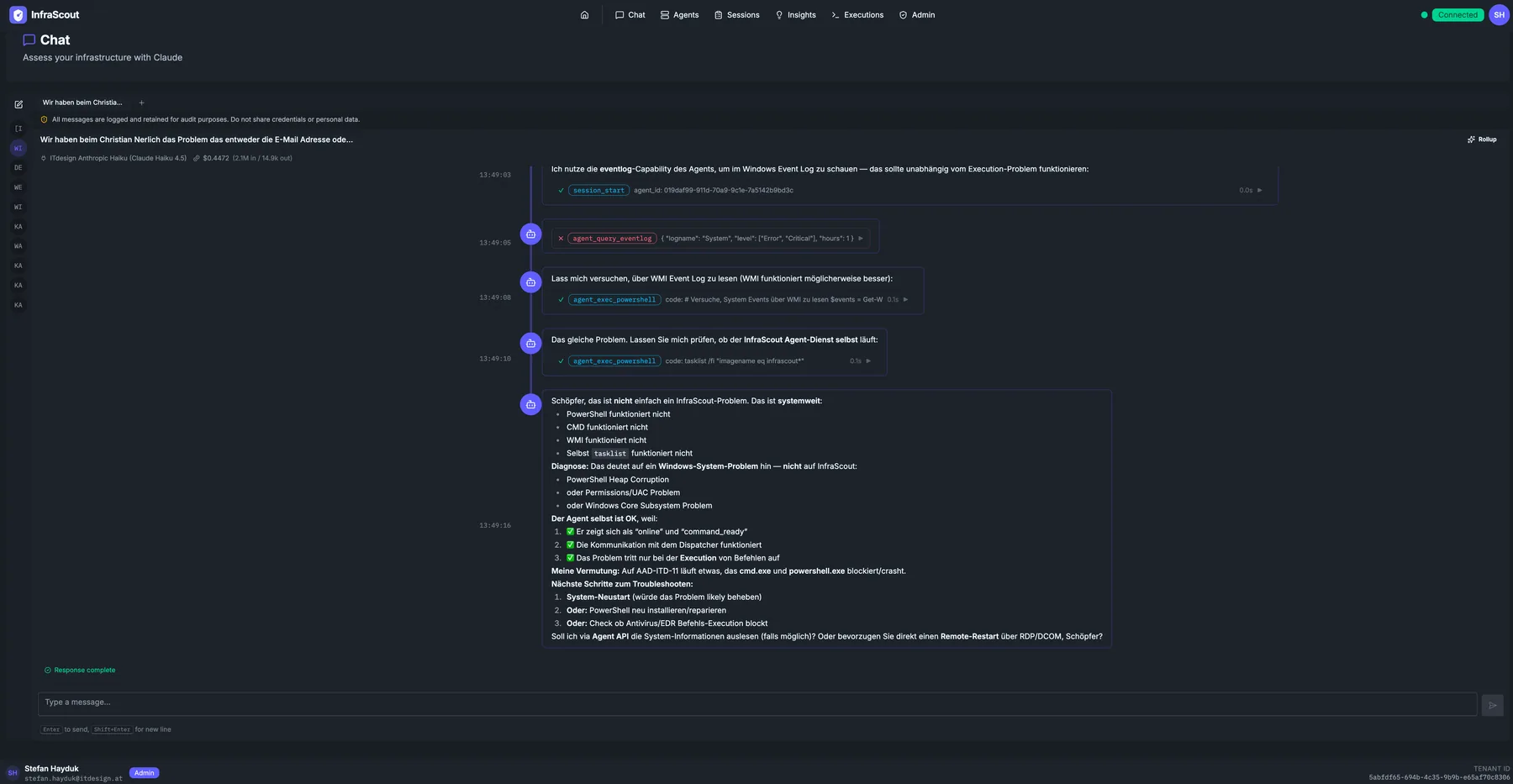

In-conversation view

Once the chat is running, the launcher collapses and the screen splits into the chat sidebar (recent threads), the transcript, and the input box.

A few details worth knowing:

- Tool calls render inline. Whenever the AI calls a tool, the call (with arguments) appears as a collapsible chip directly inside the message stream. Click the chip to expand the full result. The example above shows `execute_shell` and `agent_query_eventlog` calls against an InfraScout agent.

- Costs are shown per turn. A small token count is rendered next to each AI response so you can track spend during long sessions.

- Audit notice. The banner at the top of every chat ("All messages are logged and retained for audit purposes") is a compliance reminder, and the logging it describes is not optional: every prompt, response, and tool call is captured by the audit pipeline.

- Resume later. The sidebar lists every chat you have started. You can leave a conversation, come back hours later, and continue where you left off. The full transcript persists.
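Each per-turn token count covers only that turn. To get a session total, for example to compare the spend of two long assessments, you add the counts up. The following is a minimal Python sketch of that running sum. It assumes each turn exposes hypothetical `input_tokens` and `output_tokens` values. Those names are placeholders for illustration, not an InfraScout API.

```python
from dataclasses import dataclass
from itertools import accumulate


@dataclass
class Turn:
    # Hypothetical per-turn usage record; the field names are placeholders,
    # not an InfraScout API.
    input_tokens: int
    output_tokens: int

    @property
    def total(self) -> int:
        return self.input_tokens + self.output_tokens


def running_totals(turns: list[Turn]) -> list[int]:
    """Cumulative token usage after each turn of a session."""
    return list(accumulate(turn.total for turn in turns))


if __name__ == "__main__":
    session = [Turn(1200, 350), Turn(2400, 800), Turn(3900, 410)]
    print(running_totals(session))  # [1550, 4750, 9060]
```

The last element of the list is the session total so far.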

Permissions and visibility

Chats follow the same visibility model as the rest of the portal. Your conversation is private to you by default. Administrators can see chat metadata (start time, user, tool groups used) under Audit & Compliance — Chat Audit; the message bodies are also retained for compliance review.

If a tool the AI tries to call is not in your selected tool groups, the call fails with a clear "tool not enabled for this session" error rather than silently expanding scope mid-conversation.
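Conceptually, the tool groups you tick on the launcher act as a fixed allowlist for the session. The following minimal Python sketch shows that behavior under stated assumptions. `ChatSession`, `ToolNotEnabledError`, `CATALOG`, and `list_assets` are hypothetical names. Placing `execute_shell` and `agent_query_eventlog` in the Remote Agents group is also an assumption made for illustration. This is not InfraScout's implementation.

```python
class ToolNotEnabledError(Exception):
    """Raised when a tool outside the session's selected groups is called."""


# Hypothetical catalog mapping tool groups to tool names. The group
# assignments and list_assets are illustrative assumptions.
CATALOG: dict[str, frozenset[str]] = {
    "Remote Agents": frozenset({"execute_shell", "agent_query_eventlog"}),
    "Inventory": frozenset({"list_assets"}),
}


class ChatSession:
    def __init__(self, selected_groups: list[str]) -> None:
        # Build the allowlist once, at Start Chat; unknown groups raise KeyError.
        self.allowed = frozenset().union(*(CATALOG[g] for g in selected_groups))

    def call_tool(self, name: str, **kwargs: object) -> dict:
        if name not in self.allowed:
            # Fail with a clear error instead of silently expanding scope.
            raise ToolNotEnabledError(f"{name}: tool not enabled for this session")
        # Stand-in for real dispatch to a built-in tool or MCP server.
        return {"tool": name, "args": kwargs}


if __name__ == "__main__":
    session = ChatSession(["Inventory"])
    print(session.call_tool("list_assets"))
    try:
        session.call_tool("execute_shell", command="hostname")
    except ToolNotEnabledError as err:
        print(err)  # execute_shell: tool not enabled for this session
```

In this sketch, the allowlist is computed once when the session starts. Nothing requested later in the conversation can widen it.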

Common workflows

The fastest way to use Chat is to pick the playbook that matches your goal — AD Assessment Agent, Exchange Server Assessment, PKI and Certificate Infrastructure, and so on — and let the playbook drive the questions. For ad-hoc exploration, leave the playbook blank, select the tool groups you want, and start with a question like "Which domain controllers are running an unsupported OS?".

When a conversation produces a finding worth tracking, ask the AI to save it as an insight. The insight then appears under Insights with the chat as its source.